A Mail Delivery Mystery: Exim, systemd, setuid, and Docker, oh my! 10 Oct 5:44 PM (12 days ago)

On mail.quux, a node of NNCPNET (the NNCP-based peer-to-peer email network), I started noticing emails not being delivered. They were all in the queue, frozen, and Exim’s log had entries like:

unable to set gid=5001 or uid=5001 (euid=100): local delivery to [redacted] transport=nncp

Weird.

Stranger still, when I manually ran the queue with sendmail -qff -v, they all delivered fine.

Huh.

Well, I thought, it was a one-off weird thing. But then it happened again.

Upon investigating, I observed that this issue was happening only on messages submitted by SMTP. Which, on these systems, aren’t that many.

While trying different things, I tried submitting a message to myself using SMTP. Nothing to do with NNCP at all. But look at this:

jgoerzen@[redacted] R=userforward defer (-1): require_files: error for /home/jgoerzen/.forward: Permission denied

Strraaannnge….

All the information I could find about this, even a FAQ entry, said that the problem is that Exim isn’t setuid root. But it is:

-rwsr-xr-x 1 root root 1533496 Mar 29 2025 /usr/sbin/exim4

This problem started when I upgraded to Debian Trixie. So what changed there?

There are a lot of possibilities; this is running in Docker using my docker-debian-base system, which runs a regular Debian in Docker, including systemd.

I eventually tracked it down to Exim migrating from init.d to systemd in trixie, and putting a bunch of lockdowns in its service file. After a bunch of trial and error, I determined that I needed to override this set of lockdowns to make it work. These overrides did the trick:

ProtectClock=false
PrivateDevices=false
RestrictRealtime=false
ProtectKernelModules=false
ProtectKernelTunables=false
ProtectKernelLogs=false
ProtectHostname=false

I don’t know for sure if the issue is related to setuid. But if it is, there’s nothing that immediately jumps out at me about any of these that would indicate a problem with setuid.

I also don’t know if running in Docker makes any difference.

Anyhow, problem fixed, but mystery not solved!

I’m Not Very Popular, Thankfully. That Makes The Internet Fun Again 9 Oct 4:59 PM (13 days ago)

“Like and subscribe!”

“Help us get our next thousand (or million) followers!”

I was using Linux before it was popular. Back in the day where you had to write Modelines for your XF86Config file — and do it properly, or else you might ruin your monitor. Back when there wasn’t a word processor (thankfully; that forced me to learn LaTeX, which I used to write my papers in college).

I then ran Linux on an Alpha, a difficult proposition in an era when web browsers were either closed-source or too old to be useful; all sorts of workarounds, including emulating Digital UNIX.

Recently I wrote a deep dive into the DOS VGA text mode and how to achieve it on a modern UEFI Linux system.

Nobody can monetize things like this. I am one of maybe a dozen or two people globally that care about that sort of thing. That’s fine.

Today, I’m interested in things like asynchronous communication, NNCP, and Gopher. Heck, I’m posting these words on a blog. Social media displaced those, right?

Some of the things I write about here have maybe a few dozen people on the planet interested in them. That’s fine.

I have no idea how many people read my blog. I have no idea where people hear about my posts from. I guess I can check my Mastodon profile to see how many followers I have, but it’s not something I tend to do. I don’t know if the number is going up or down, or if it is all that much in Mastodon terms (probably not).

Thank goodness.

Since I don’t have to care about what’s popular, or spend hours editing video, or thousands of dollars on video equipment, I can just sit down and write about what interests me. If that also interests you, then great. If not, you can find what interests you — also fine.

I once had a colleague that was one of these “plugged into Silicon Valley” types. He would periodically tell me, with a mixture of excitement and awe, that one of my posts had made Hacker News.

This was always news to me, because I never paid a lot of attention over there. Occasionally that would bring in some excellent discussion, but more often than not, it was comments from people that hadn’t read or understood the article trying to appear smart by arguing with what it — or rather, what they imagined it said, I guess.

The thing I value isn’t subscriber count. It’s discussion. A little discussion in the comments or on Mastodon – that’s perfect, even if only 10 people read the article. I have the most fun in a community.

And I’ll go on writing about NNCP and Gopher and non-square DOS pixels, with audiences of dozens globally. I have no advertisers to keep happy, and I enjoy it, so why not?

A Twisty Maze of Ill-Behaved Bots 1 Oct 7:01 PM (21 days ago)

Like many others, I have seen bot traffic cause significant issues for my hosted server recently. I’ve been noticing a dramatic increase in bots that do not respect robots.txt, especially the crawl-delay I have set there. Not only that, but many of them send user-agent strings that precisely match what desktop browsers send. That is, they don’t identify themselves.

They posed a particular problem on two sites: my blog, and the lists.complete.org archives.

The list archive is a completely static site, but it has many pages, so the ill-behaved bots absolutely hammer it by following links.

My blog runs WordPress. It has fewer pages, but by using PHP, doesn’t need as many hits to start to bog down. Also, there is a Mastodon thundering herd problem, and since I participate on Mastodon, this hits my server.

The solution was one of layers.

I had already added a crawl-delay line to robots.txt. It helped a bit, but many bots these days aren’t well-behaved. Next, I added WP Super Cache to my WordPress installation. I also enabled APCu in PHP and installed APCu Manager. Again, each step helped. Again, not quite enough.

Finally, I added Anubis. Installing it (especially if using the Docker container) was under-documented, but I figured it out. By default, it is designed to block AI bots and try to challenge everything with “Mozilla” in its user-agent (which is most things) with a Javascript challenge.

That’s not quite what I want. If a bot is well-behaved, AI or otherwise, it will respect my robots.txt and I can more precisely control it there. Also, I intentionally support non-Javascript browsers on many of the sites I host, so I wanted to be judicious. Eventually I configured Anubis to only challenge things that present a user-agent that looks fully like a real browser. In other words, real browsers should pass right through, and bad bots pretending to be real browsers will fail.

That was quite effective. It reduced load further to the point where things are ordinarily fairly snappy.

I had previously been using mod_security to block some bots, but it seemed to be getting in the way of the Fediverse at times. When I disabled it, I observed another increase in speed. Anubis was likely going to get rid of those annoying bots itself anyhow.

As a final step, I migrated to a faster hosting option. This post will show me how well it survives the Mastodon thundering herd!

Update: Yes, it handled it quite nicely now.

Running an Accurate 80×25 DOS-Style Console on Modern Linux Is Possible After All 18 Sep 4:58 AM (last month)

Here, in classic Goerzen deep dive fashion, is more information than you knew you wanted about a topic you’ve probably never thought of. I found it pretty interesting, because it took me down a rabbit hole of subsystems I’ve never worked with much and a mishmash of 1980s and 2020s tech.

I had previously tried and failed to get an actual 80x25 Linux console, but I’ve since figured it out!

This post is about the Linux text console – not X or Wayland. We’re going to get the console right without using those systems. These instructions are for Debian trixie, but should be broadly applicable elsewhere also. The end result can look like this:

(That’s a Wifi Retromodem that I got at VCFMW last year in the Hayes modem case)

What’s a pixel?

How would you define a “pixel” these days? Probably something like “a uniquely-addressable square dot in a two-dimensional grid”.

In the world of VGA and CRTs, that was just a logical abstraction. We got an API centered around that because it was convenient. But, down the VGA cable and on the device, that’s not what a pixel was.

A pixel, back then, was a time interval. On multisync monitors, which were common except in the very early days of VGA, the timings could be adjusted, which produced logical pixels of different sizes. Those screens often had a maximum resolution but not necessarily a “native resolution” in the sense that an LCD panel does. Different timings produced different-sized pixels with equal clarity (or, on cheaper monitors, equal fuzziness).

A side effect of this was that pixels need not be square. And, in fact, in the standard DOS VGA 80x25 text mode, they weren’t.

You might be seeing why DVI, DisplayPort, and HDMI replaced VGA for LCD monitors: with a VGA cable, you did a pixel-to-analog-timings conversion, then the display did a timings-to-pixels conversion, and this process could be a bit lossy. (Hence why you sometimes needed to fill the screen with an image and push the “center” button on those older LCD screens)

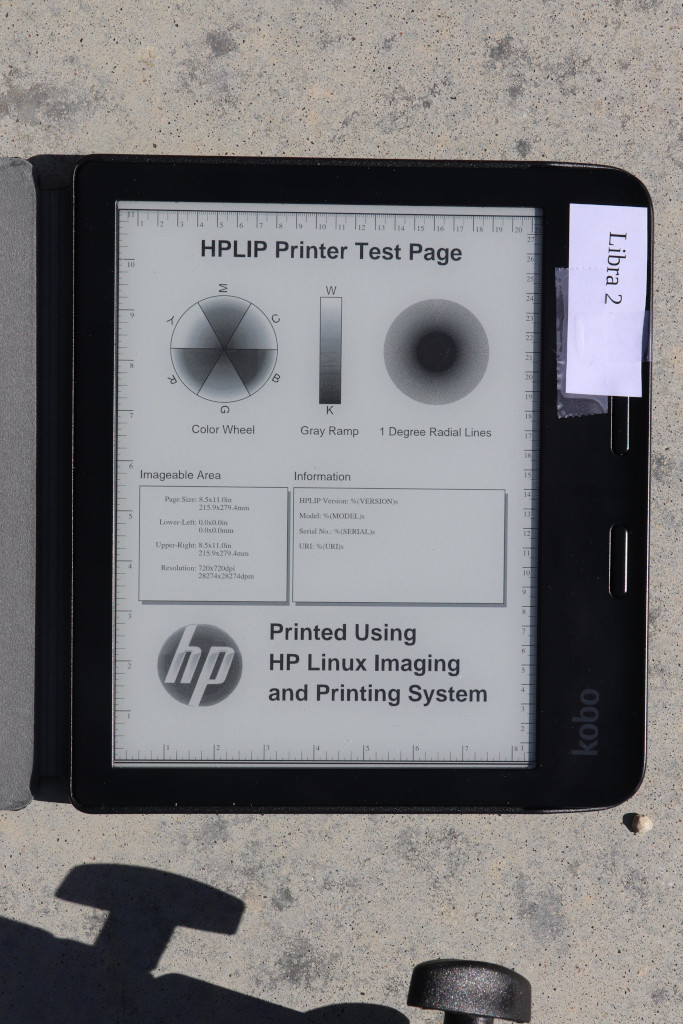

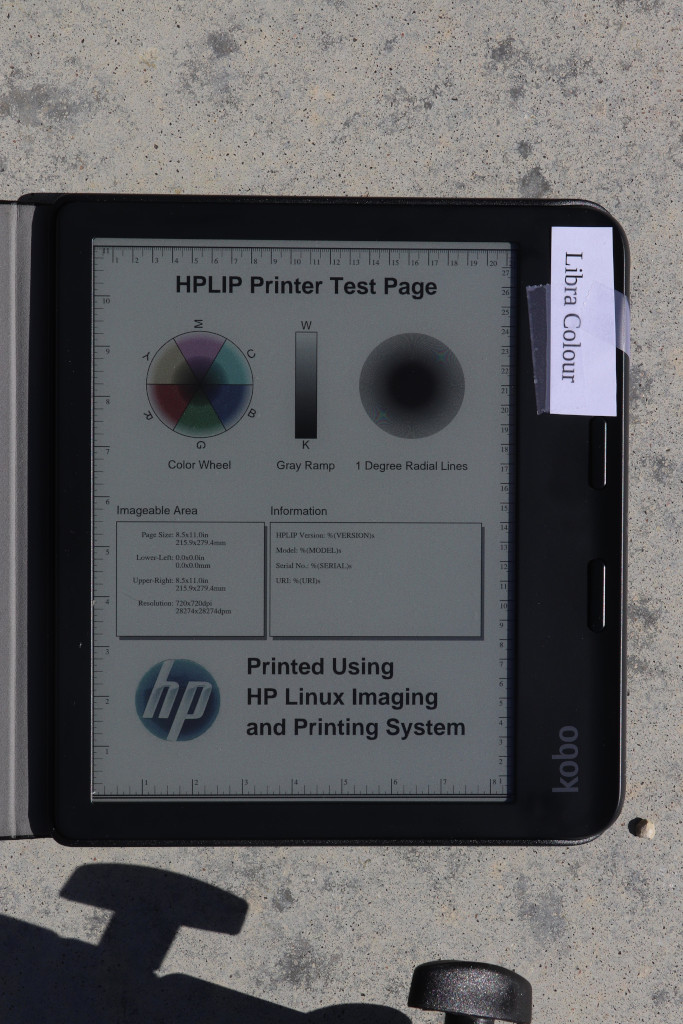

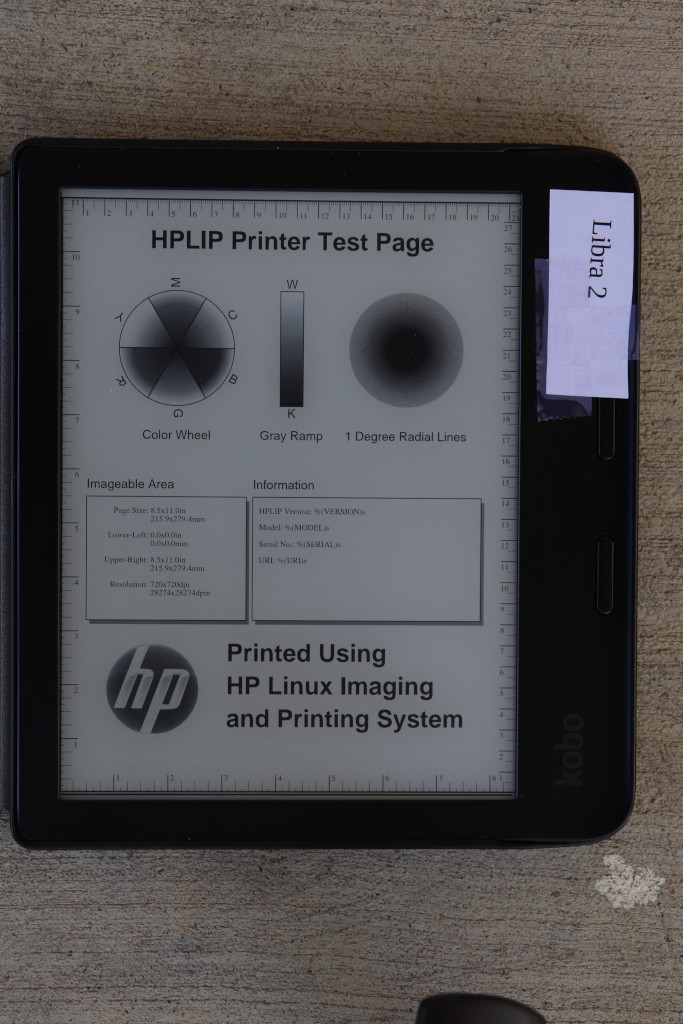

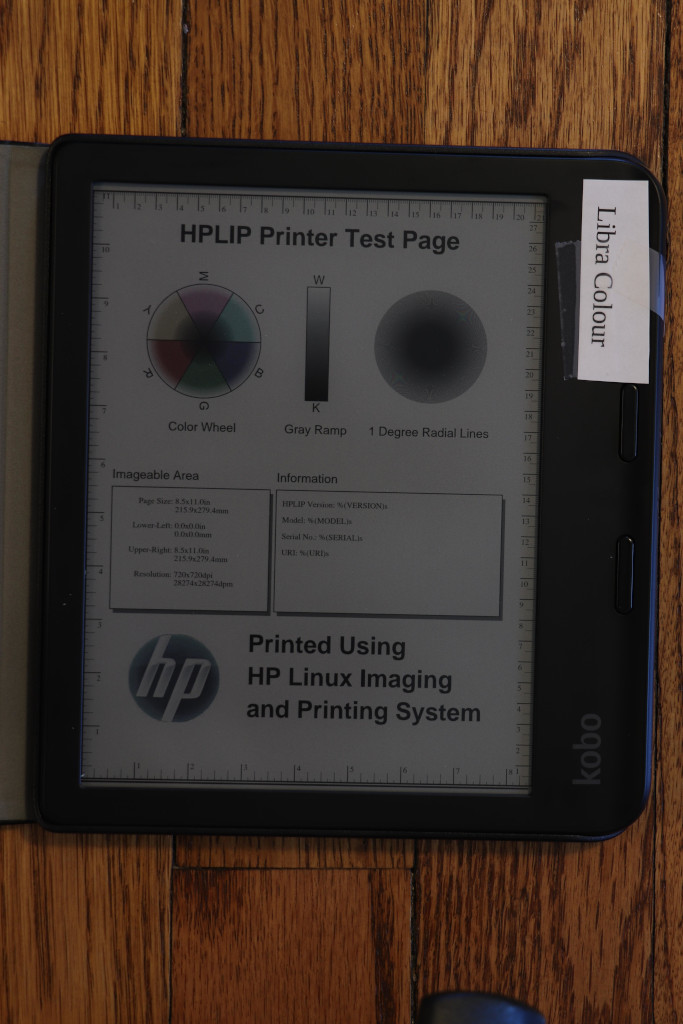

(Note to the pedantically-inclined: yes I am aware that I have simplified several things here; for instance, a color LCD pixel is made up of approximately 3 sub-dots of varying colors, and that things like color eInk displays have two pixel grids with different sizes of pixels layered atop each other, and printers are another confusing thing altogether, and and and…. MOST PEOPLE THINK OF A PIXEL AS A DOT THESE DAYS, OK?)

What was DOS text mode?

We think of this as the “standard” display: 80 columns wide and 25 rows tall. 80x25. By the time Linux came along, the standard Linux console was VGA text mode – something like the 4th incarnation of text modes on PCs (after CGA, MDA, and EGA). VGA also supported certain other sizes of characters giving certain other text dimensions, but if I cover all of those, this will explode into a ridiculously more massive page than it already is.

So to display text on an 80x25 DOS VGA system, ultimately characters and attributes were written into the text buffer in memory. The VGA system then rendered it to the display as a 720x400 image (at 70Hz) with non-square pixels such that the result was approximately a 4:3 aspect ratio.

The font used for this rendering was a bitmapped one using 8x16 cells. You might do some math here and point out that 8 * 80 is only 640, and you’d be correct. The fonts were 8x16 but the rendered cells were 9x16. The extra pixel was normally used for spacing between characters. However, in line graphics mode, characters 0xC0 through 0xDF repeated the 8th column in the position of the 9th, allowing the continuous line-drawing characters we’re used to from TUIs.
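To make the 9th-column rule concrete, here is a minimal Python sketch of how one 8-pixel glyph row becomes a 9-pixel cell row. The function name and the bit layout (most significant bit is the leftmost pixel) are my own assumptions for illustration; only the 0xC0 through 0xDF rule comes from the description above.

```python
def expand_row_to_9_columns(row_bits, char_code):
    """Expand one 8-pixel glyph row to the 9 pixels of a VGA text cell.

    row_bits:  8-bit integer, most significant bit = leftmost pixel
               (an assumed layout for this sketch).
    char_code: the character's code, 0x00-0xFF.
    """
    if not 0 <= row_bits <= 0xFF:
        raise ValueError("row_bits must fit in 8 bits")
    ninth = 0  # normally the 9th column is blank spacing
    if 0xC0 <= char_code <= 0xDF:
        # Line-graphics range: repeat the 8th column in the 9th position.
        ninth = row_bits & 1
    return (row_bits << 1) | ninth


full_row = 0b11111111
print(f"{expand_row_to_9_columns(full_row, 0xC4):09b}")  # box-drawing range
print(f"{expand_row_to_9_columns(full_row, 0x41):09b}")  # the letter 'A'
```

A renderer that always leaves the 9th column blank produces gaps in line-drawing characters, and one that drops the column entirely produces touching letters.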

Problems rendering DOS fonts on modern systems

By now, you’re probably seeing some of the issues we have rendering DOS screens on more modern systems. These aren’t new at all; I remember some of these from back in the days when I ran OS/2, and I think also saw them on various terminals and consoles in OS/2 and Windows.

Some issues you’d encounter would be:

- Incorrect aspect ratio caused by using the original font and rendering it using 1:1 square pixels (resulting in a squashed appearance)

- Incorrect aspect ratio for ANOTHER reason, caused by failing to render column 9, resulting in text that is overall too narrow

- Characters appearing to be touching each other when they shouldn’t (failing to render column 9; looking at you, dosbox)

- Gaps between line drawing characters that should be continuous, caused by rendering column 9 as empty space in all cases

Character set issues

DOS was around long before Unicode was. In the DOS world, there were codepages that selected the glyphs for roughly the high half of the 256 possible characters. CP437 was the standard for the USA; others existed for other locations that needed different characters. On Unix, the USA pre-Unicode standard was Latin-1. Same concept, but with different character mappings.

Nowadays, just about everything is based on UTF-8. So, we need some way to map our CP437 glyphs into Unicode space. If we are displaying DOS-based content, we’ll also need a way to map CP437 characters to Unicode for display later, and we need these maps to match so that everything comes out right. Whew.
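As a quick illustration of why the mapping matters, this small Python sketch decodes the same bytes under CP437 and under Latin-1, using Python's built-in codecs. The helper name decode_both is just something I made up for the example.

```python
def decode_both(byte_value):
    """Return (CP437 character, Latin-1 character) for one byte value."""
    raw = bytes([byte_value])
    return raw.decode("cp437"), raw.decode("latin-1")


# Three bytes that are shading and line-drawing glyphs in CP437.
for byte_value in (0xB0, 0xC4, 0xDB):
    dos_char, latin_char = decode_both(byte_value)
    print(
        f"0x{byte_value:02X}: "
        f"CP437 U+{ord(dos_char):04X} {dos_char}   "
        f"Latin-1 U+{ord(latin_char):04X} {latin_char}"
    )
```

The same byte lands on completely different Unicode codepoints depending on which codepage you assume, which is why the font's map and the conversion tool have to agree.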

So, let’s get on with setting this up!

Selecting the proper video mode

As explained in my previous post, proper hardware support for DOS text mode is limited to x86 machines that do not use UEFI. Non-x86 machines, or x86 machines with UEFI, simply do not contain the necessary support for it. As these are now standard, most of the time, the text console you see on Linux is actually the kernel driving the video hardware in graphics mode, and doing the text rendering in software.

That’s all well and good, but it makes it quite difficult to actually get an 80x25 console.

First, we need to be running at 720x400. This is where I ran into difficulty last time. I realized that my laptop’s LCD didn’t advertise any video modes other than its own native resolution. However, almost all external monitors will, and 720x400@70 is a standard VGA mode from way back, so it should be well-supported.

You need to find the Linux device name for your device. You can look at the possible devices with ls -l /sys/class/drm. If you also have a GUI, xrandr may help too. But in any case, each directory under /sys/class/drm has a file named modes, and if you cat them all, you will eventually come across one with a bunch of modes defined. Drop the leading “card0” or whatever from the directory name, and that’s your device. (Verify that 720x400 is in modes while you’re at it.)
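If you would rather not cat every file by hand, the search can be sketched in Python. This is only a rough helper under my own assumptions: the function names are invented here, and it assumes each modes file lists one mode name per line.

```python
from pathlib import Path


def connector_name(drm_dir_name):
    """'card0-DP-1' -> 'DP-1'; entries without a connector part give ''."""
    _card, sep, connector = drm_dir_name.partition("-")
    return connector if sep else ""


def connectors_with_mode(mode="720x400", drm_root="/sys/class/drm"):
    """Return connector names whose 'modes' file lists the given mode."""
    root = Path(drm_root)
    if not root.is_dir():
        return []
    found = []
    for entry in sorted(root.iterdir()):
        modes_file = entry / "modes"
        name = connector_name(entry.name)
        if not name or not modes_file.is_file():
            continue
        try:
            modes = modes_file.read_text().split()
        except OSError:
            continue  # unreadable entry; skip it
        if mode in modes:
            found.append(name)
    return found


if __name__ == "__main__":
    for name in connectors_with_mode():
        # Print a candidate kernel parameter for each matching output.
        print(f"video={name}:720x400@70")
```

An empty result means none of the connected displays advertises 720x400, which is what I ran into with my laptop panel.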

Now, you’re going to edit /etc/default/grub and add something like this to GRUB_CMDLINE_LINUX_DEFAULT:

video=DP-1:720x400@70

Of course, replace DP-1 with whatever your device is.

Now you can run update-grub and reboot. You should have a 720x400 display.

At first, I thought I had succeeded by using Linux’s built-in VGA font with that mode. But it looked too tall. After noticing that repeated 0s were touching, I got suspicious about the missing 9th column in the cells. stty -a showed that my screen was 90x25, which is exactly what it would show if I was using 8x16 instead of 9x16 cells. Sooo…. I need to prepare a 9x16 font.
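The arithmetic behind that suspicion is simple enough to sketch in Python (console_size is just an illustrative name):

```python
def console_size(width_px, height_px, cell_width, cell_height):
    """Return (columns, rows) for a framebuffer console with fixed-size cells."""
    return width_px // cell_width, height_px // cell_height


print(console_size(720, 400, 8, 16))  # (90, 25): what the 8x16 font gives
print(console_size(720, 400, 9, 16))  # (80, 25): what DOS text mode had
```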

Preparing a font

Here’s where it gets complicated.

I’ll give you the simple version and the hard mode.

The simple mode is this: Download https://www.complete.org/downloads/CP437-VGA.psf.gz and stick it in /usr/local/etc, then skip to the “Activating the font” section below.

The font assembled here is based on the Ultimate Oldschool PC Font Pack v2.2, which is (c) 2016-2020 VileR and licensed under Creative Commons Attribution-ShareAlike 4.0 International License. My psf file is derived from this using the instructions below.

Building it yourself

First, install some necessary software: apt-get install fontforge bdf2psf

Start by going to the Oldschool PC Font Pack Download page. Download oldschool_pc_font_pack_v2.2_FULL.zip and unpack it.

The file we’re interested in is otb - Bm (linux bitmap)/Bm437_IBM_VGA_9x16.otb. Open it in fontforge by running fontforge Bm437_IBM_VGA_9x16.otb. When it asks if you will load the bitmap fonts, hit select all, then yes. Go to File -> generate fonts. Save in a BDF, no need for outlines, and use “guess” for resolution.

Now you have a file such as Bm437_IBM_VGA_9x16-16.bdf. Excellent.

Now we need to generate a Unicode map file. We will make sure this matches the system’s by enumerating every character from 0x00 to 0xFF, converting it from CP437 to Unicode, and writing the appropriate map.

Here’s a Python script to do that:

for i in range(0, 256):
    cp437b = b'%c' % i
    uni = ord(cp437b.decode('cp437'))
    print(f"U+{uni:04x}")

Save that file as genmap.py and run python3 genmap.py > cp437-uni.

Now, we’re ready to build the psf file:

bdf2psf --fb Bm437_IBM_VGA_9x16-16.bdf \
    /dev/null cp437-uni 256 CP437-VGA.psf

By convention, we normally store these files gzipped, so gzip CP437-VGA.psf.

You can test it on the console with setfont CP437-VGA.psf.gz.

Now copy this file into /usr/local/etc.

Activating the font

Now, edit /etc/default/console-setup. It should look like this:

# CONFIGURATION FILE FOR SETUPCON

# Consult the console-setup(5) manual page.

ACTIVE_CONSOLES="/dev/tty[1-6]"

CHARMAP="UTF-8"

CODESET="Lat15"

FONTFACE="VGA"

FONTSIZE="8x16"

FONT=/usr/local/etc/CP437-VGA.psf.gz

VIDEOMODE=

# The following is an example how to use a braille font

# FONT='lat9w-08.psf.gz brl-8x8.psf'

At this point, you should be able to reboot. You should have a proper 80x25 display! Log in and run stty -a to verify it is indeed 80x25.

Using and testing CP437

Part of the point of CP437 is to be able to access BBSs, ANSI art, and similar.

Now, remember, the Linux console is still in UTF-8 mode, so we have to translate CP437 to UTF-8, then let our font map translate it back to CP437. A weird trip, but it works.

Let’s test it using the Textfiles ANSI art collection. In the artworks section, I randomly grabbed a file near the top: borgman.ans. Download that, and display with:

clear; iconv -f CP437 -t UTF-8 < borgman.ans

You should see something similar to – but actually more accurate than – the textfiles PNG rendering of it, which you’ll note has an incorrect aspect ratio and some rendering issues. I spot-checked with a few others and they seemed to look good. belinda.ans in particular tries quite a few characters and should give you a good sense if it is working.
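For the curious, the conversion iconv performs here can be approximated in a few lines of Python. This is a sketch using Python's built-in cp437 codec, which I am assuming agrees with iconv for the characters that matter in ANSI art; the function name is mine.

```python
def cp437_to_utf8(data):
    """Decode CP437 bytes and re-encode the text as UTF-8 bytes."""
    return data.decode("cp437").encode("utf-8")


# Bytes 0xC9 0xCD 0xCD 0xBB are the top edge of a double-line box in CP437.
top_edge = bytes([0xC9, 0xCD, 0xCD, 0xBB])
print(cp437_to_utf8(top_edge).decode("utf-8"))
```

To convert a whole file, read it in binary mode, pass the bytes through cp437_to_utf8, and write the result to sys.stdout.buffer. ANSI escape sequences pass through untouched because they are plain ASCII.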

Use with interactive programs

That’s all well and good, but you’re probably going to want to actually use this with some interactive program that expects CP437. Maybe Minicom, Kermit, or even just telnet?

For this, you’ll want to apt-get install luit. luit maps CP437 (or any other encoding) to UTF-8 for display, and then of course the Linux console maps UTF-8 back to the CP437 font.

Here’s a way you can repeat the earlier experiment using luit to run the cat program:

clear; luit -encoding CP437 cat borgman.ans

You can run any command under luit. You can even run luit -encoding CP437 bash if you like. If you do this, it is probably a good idea to follow my instructions on generating locales on my post on serial terminals, and then within luit, set LANG=en_US.IBM437. But note especially that you can run programs like minicom and others for accessing BBSs under luit.

Final words

This gave you a nice DOS-type console. Although it doesn’t have glyphs for many codepoints, it does run in UTF-8 mode and therefore is compatible with modern software.

You can achieve greater compatibility with more UTF-8 codepoints with the DOS font, at the expense of accuracy of character rendering (especially for the double-line drawing characters) by using /usr/share/bdf2psf/standard.equivalents instead of /dev/null in the bdf2psf command.

Or you could go for another challenge, such as using the DEC vt-series fonts for coverage of ISO-8859-1. But just using fonts extracted from DEC ROM won’t work properly, because DEC terminals had even more strangeness going on than DOS fonts.

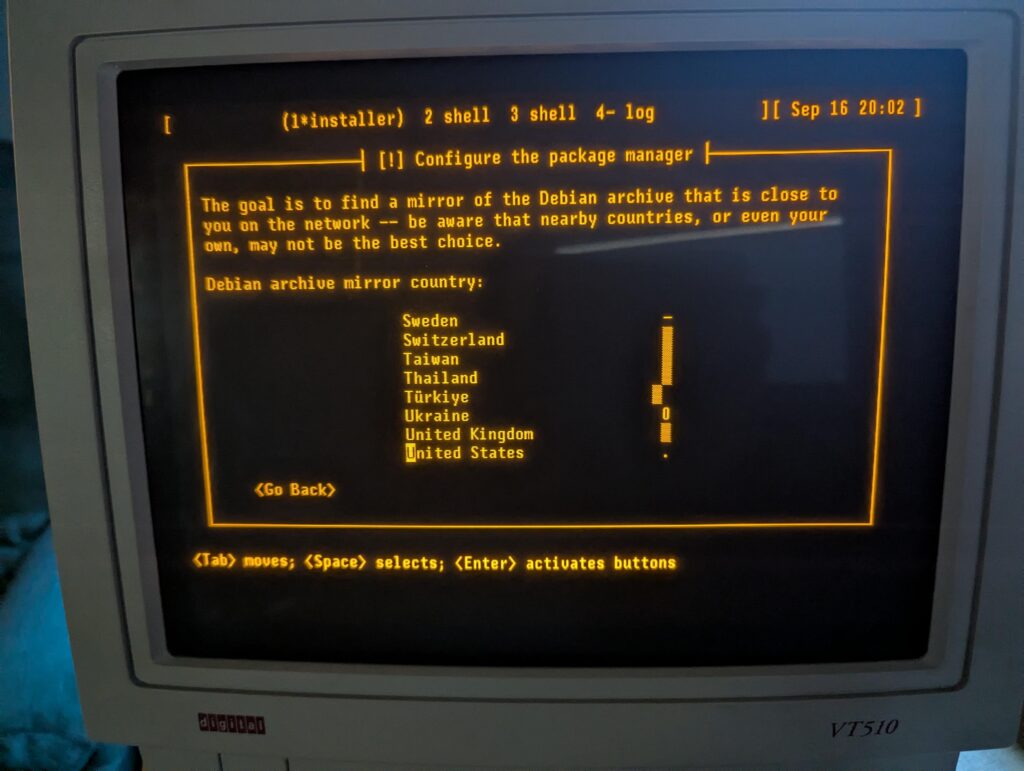

Installing and Using Debian With My Decades-Old Genuine DEC vt510 Serial Terminal 17 Sep 4:49 AM (last month)

Six years ago, I was inspired to buy a DEC serial terminal. Since then, my collection has grown to include several DEC models, an IBM 3151, a Wyse WY-55, a Televideo 990, and a few others.

When you are running a terminal program on Linux or MacOS, what you are really running is a terminal emulator. In almost all cases, the terminal emulator is emulating one of the DEC terminals in the vt100 through vt520 line, which themselves use a command set based on an ANSI standard.

In short, you spend all day using a program designed to pretend to be the exact kind of physical machine I’m using for this experiment!

I have long used my terminals connected to a Raspberry Pi 4, but due to the difficulty of entering a root filesystem encryption password using a serial console on a Raspberry Pi, I am switching to an x86 Mini PC (with a N100 CPU).

While I have used a terminal with the Pi, I’ve never before used it as a serial console all the way from early boot, and I have never installed Debian using the terminal to run the installer. A serial terminal gives you a login prompt. A serial console gives you access to kernel messages, the initrd environment, and sometimes even the bootloader.

This might be fun, I thought.

I selected one of my vt510 terminals for this. It is one of my newer ones, having been built in 1993. But it has a key feature: I can remap Ctrl to be at the caps lock position, something I do on every other system I use anyhow. I could have easily selected an older one from the 1980s.

Kernel configuration

To enable a serial console for Linux, you need to pass a parameter on the kernel command line. See the kernel documentation for more. I very frequently see instructions that are incomplete; they particularly omit flow control, which is most definitely needed for these real serial terminals.

I run my terminal at 57600 bps, so the parameter I need is console=ttyS0,57600n8r. The “r” means to use hardware flow control (ttyS0 corresponds to the first serial port on the system; use ttyS1 or something else as appropriate for your situation). While booting the Debian installer, according to Debian’s instructions, it may be useful to also add TERM=vt102 (the installer doesn’t support the vt510 terminal type directly). The TERM parameter should not be specified on a running system after installation.
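Since the option string is easy to get subtly wrong, here is a small Python sketch that assembles it from its parts: speed, parity, data bits, and the optional “r” for RTS/CTS flow control. The function and parameter names are my own, not anything from the kernel.

```python
def serial_console_param(port="ttyS0", baud=57600, parity="n", bits=8,
                         rts_cts=True):
    """Build a console= kernel parameter such as console=ttyS0,57600n8r."""
    if parity not in ("n", "o", "e"):
        raise ValueError("parity must be 'n' (none), 'o' (odd) or 'e' (even)")
    if bits not in (7, 8):
        raise ValueError("bits must be 7 or 8")
    flow = "r" if rts_cts else ""  # trailing 'r' requests hardware flow control
    return f"console={port},{baud}{parity}{bits}{flow}"


print(serial_console_param())
print(serial_console_param(port="ttyS1", baud=9600, rts_cts=False))
```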

Booting the Debian installer

When you start the Debian installer, to get it into serial mode, you have a couple of options:

- You can use a traditional display and keyboard just long enough to input the kernel parameters described above

- You can edit the bootloader configuration on the installer’s filesystem prior to booting from it

Option 1 is pretty easy. Option 2 is hard mode, but not that bad.

On x86, the Debian installer boots in at least two different ways: it uses GRUB if you’re booting under UEFI (which is most systems these days), or ISOLINUX if you are booting from the BIOS.

If using GRUB, the file to edit on the installer image is boot/grub/grub.cfg.

Near the top, add these lines:

serial --unit=0 --speed=57600 --word=8 --parity=no --stop=1
terminal_input console serial
terminal_output console serial

Unit 0 corresponds to ttyS0 as above.

GRUB’s serial command does not support flow control. If your terminal gets corrupted during the GRUB stage, you may need to configure it to a slower speed.

Then, find the “linux” line under the “Install” menuentry. Edit it to insert console=ttyS0,57600n8r TERM=vt102 right after the vga=788.

Save, unmount, and boot. You should see the GRUB screen displayed on your serial terminal. Select the Install option and the installer begins.

If you are using BIOS boot, I’m sure you can do something similar with the files in the isolinux directory, but haven’t researched it.

Now, you can install Debian like usual!

Configuring the System

I was pleasantly surprised to find that Debian’s installer took care of many, but not all, of the things I want to do in order to make the system work nicely with a serial terminal. You can perform these steps from a chroot under the installer environment before a reboot, or later in the running system.

First, while Debian does set up a getty (the program that displays the login prompt) on the serial console by default, it doesn’t enable hardware flow control. So let’s do that.

Configuring the System: agetty with systemd

Run systemctl edit serial-getty@ttyS0.service. This opens an editor that lets you customize the systemd configuration for a given service without having to edit the file directly. All you really need to do is modify the agetty command, so we just override it. At the top, in the designated area, write:

[Service]
ExecStart=
ExecStart=-/sbin/agetty --wait-cr -8 -h -L=always %I 57600 vt510

The empty ExecStart= line is necessary to tell systemd to remove the existing ExecStart command (otherwise, it will logically contain two ExecStart lines, which is an error).

These arguments say:

- --wait-cr means to wait for the user to press Return at the terminal before attempting to display the login prompt

- -8 tells it to assume 8-bit mode on the serial line

- -h enables hardware flow control

- -L=always enables local line mode, disabling monitoring of modem control lines

- %I substitutes the name of the port from systemd

- 57600 gives the desired speed, and vt510 gives the desired setting for the TERM environment variable
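If you manage several terminals at different speeds, the override text can be generated rather than typed. The following Python sketch only reassembles the flags listed above; the function name and its parameters are hypothetical conveniences of mine.

```python
def agetty_override(speed=57600, term="vt510", hardware_flow=True):
    """Return the text of a systemd drop-in that replaces the agetty command."""
    args = ["--wait-cr", "-8"]
    if hardware_flow:
        args.append("-h")  # RTS/CTS hardware flow control
    args += ["-L=always", "%I", str(speed), term]
    lines = [
        "[Service]",
        "ExecStart=",  # empty assignment clears the packaged command first
        "ExecStart=-/sbin/agetty " + " ".join(args),
    ]
    return "\n".join(lines) + "\n"


print(agetty_override(), end="")
```

The output is meant to be pasted into the editor that systemctl edit opens, not written over the packaged unit file.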

The systemd documentation refers to this page about serial consoles, which gives more background. However, I think it is better to use the systemctl edit method described here, rather than just copying the config file, since this lets things like new configurations with new Debian versions take effect.

Configuring the System: Kernel and GRUB

Your next stop is the /etc/default/grub file. Debian’s installer automatically makes some changes here. There are three lines you want to change. First, near the top, edit GRUB_CMDLINE_LINUX_DEFAULT and add console=tty0 console=ttyS0,57600n8r. By specifying console twice, you allow output to go both to the standard display and to the serial console. By specifying the serial console last, you make it be the preferred one for things like entering the root filesystem password.
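The ordering rule can be sketched in Python: collect every console= entry and treat the last one as the preferred console. The function names are mine, and the sketch only models the rule as described here rather than everything the kernel does.

```python
def console_devices(cmdline):
    """Return the device of each console= entry, in command-line order."""
    devices = []
    for word in cmdline.split():
        if word.startswith("console="):
            value = word[len("console="):]
            devices.append(value.split(",", 1)[0])  # drop ",57600n8r" etc.
    return devices


def preferred_console(cmdline):
    """Return the last console= device, or None if there is none."""
    devices = console_devices(cmdline)
    return devices[-1] if devices else None


example = "quiet console=tty0 console=ttyS0,57600n8r"
print(console_devices(example))
print(preferred_console(example))
```

On a running system you could feed it the contents of /proc/cmdline to check what the kernel was actually booted with.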

Next, towards the bottom, make sure these two lines look like this:

GRUB_TERMINAL="console serial"
GRUB_SERIAL_COMMAND="serial --unit=0 --speed=57600 --word=8 --parity=no --stop=1"

Finally, near the top, you may want to raise the GRUB_TIMEOUT to somewhere around 10 to 20 seconds since things may be a bit slower than you’re used to.

Save the file and run update-grub.

Now, GRUB will display on both your standard display and the serial console. You can edit the boot command from either. If you have a VGA or HDMI monitor attached, for instance, and need to not use the serial console, you can just edit the Linux command line in GRUB and remove the reference to ttyS0 for one boot. Easy!

That’s it. You now have a system that is fully operational from a serial terminal.

My original article from 2019 has some additional hints, including on how to convert from UTF-8 for these terminals.

Update 2025-09-17: It is also useful to set up proper locales. To do this, first edit /etc/locale.gen. Make sure to add, or uncomment:

en_US ISO-8859-1
en_US.IBM437 IBM437
en_US.UTF-8 UTF-8

Then run locale-gen. Normally, your LANG will be set to en_US.UTF-8, which will select the appropriate encoding. Plain en_US will select ISO-8859-1, which you need for the vt510. Then, add something like this to your ~/.bashrc:

if [ "$(tty)" = "/dev/ttyS0" -o "$TERM" = "vt510" ]; then
    stty -iutf8
    # might add ixon ixoff
    export LANG=en_US
    export MANOPT="-E ascii"
    stty rows 25
fi

if [ "$TERM" = "screen" -o "$TERM" = "vt100" ]; then
    export LANG=en_US.utf8
fi

Finally, in my ~/.screenrc, I have this. It lets screen convert between UTF-8 and ISO-8859-1:

defencoding UTF-8
startup_message off
vbell off
termcapinfo * XC=B%,‐-,
maptimeout 5
bindkey -k ku stuff ^[OA
bindkey -k kd stuff ^[OB
bindkey -k kr stuff ^[OC
bindkey -k kl stuff ^[OD

I just want an 80×25 console, but that’s no longer possible 15 Sep 5:53 PM (last month)

Update 2025-09-18: I figured out how to do this, at least for many non-laptop screens. This post still contains a lot of good background detail, however.

Somehow along the way, a feature that I’ve had across DOS, OS/2, FreeBSD, and Linux — and has been present on PCs for more than 40 years — is gone.

That feature, of course, is the 80×25 text console.

Linux has, for awhile now, rendered its text console using graphic modes. You can read all about it here. This has been necessary because only PCs really had the 80×25 text mode (Raspberry Pis, for instance, never did), and even they don’t have it when booted with UEFI.

I’ve lately been annoyed that:

- The console is a different size on every screen — both in terms of size of letters and the dimensions of it

- If a given machine has more than one display, one or both of them will have parts of the console chopped off

- My system seems to run with three different resolutions or fonts at different points of the boot process. One during the initrd, and two different ones during the remaining boot.

And, I wanted to run some software on the console that was designed with 80×25 in mind. And I’d like to be able to plug in an old VGA monitor and have it just work if I want to do that.

That shouldn’t be so hard, right? Well, the old vga= option that you are used to doesn’t work when you booted from UEFI or on non-x86 platforms. Most of the tricks you see online for changing resolutions, etc., are no longer relevant. And things like setting a resolution with GRUB are useless for systems that don’t use GRUB (including ARM).

VGA text mode uses 8×16 glyphs in 9×16 cells, where the pixels are non-square, giving a native resolution of 720×400 (which historically ran at 70Hz), with the pixels stretched to make a 4:3 image.

While it is possible to select a console font, and 8×16 fonts are present and supported in Linux, it appears to be impossible to have a standard way to set 720×400 so that they present in a reasonable size, at the correct aspect ratio, with 80×25.

Tricks like nomodeset no longer work on UEFI or ARM systems. It’s possible that kmscon or something like it may help, but I’m not even certain of that (video=eDP1:720x400 produced an error saying that 720×400 wasn’t a supported mode, so I’m unsure kmscon would be any better.) Not that it matters; all the kmscon options to select a font or zoom are broken, and it doesn’t offer mode selection anyhow.

I think I’m going to have to track down an old machine.

Sigh.

Performant Full-Disk Encryption on a Raspberry Pi, but Foiled by Twisty UARTs 11 Sep 5:41 AM (last month)

In my post yesterday, ARM is great, ARM is terrible (and so is RISC-V), I described my desire to find ARM hardware with AES instructions to support full-disk encryption, and the poor state of the OS ecosystem around the newer ARM boards.

I was anticipating buying either a newer ARM SBC or an x86 mini PC of some sort.

More-efficient AES alternatives

Always one to think, “what if I didn’t have to actually buy something”, I decided to research whether it was possible to use encryption algorithms that are more performant on the Raspberry Pi 4 I already have.

The answer was yes. From cryptsetup benchmark:

root@mccoy:~# cryptsetup benchmark --cipher=xchacha12,aes-adiantum-plain64

# Tests are approximate using memory only (no storage IO).

# Algorithm | Key | Encryption | Decryption

xchacha12,aes-adiantum 256b 159.7 MiB/s 160.0 MiB/s

xchacha20,aes-adiantum 256b 116.7 MiB/s 169.1 MiB/s

aes-xts 256b 52.5 MiB/s 52.6 MiB/s

With best-case reads from my SD card at 45MB/s (with dd if=/dev/mmcblk0 of=/dev/null bs=1048576 status=progress), either of the ChaCha-based algorithms will be fast enough. “Great,” I thought. “Now I can just solve this problem without spending a dollar.”
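To compare the numbers on equal footing, here is a small Python sketch that converts the benchmark's MiB/s to MB/s and divides by the card's read speed. I am assuming that dd's figure is in decimal megabytes, and taking the slower of the encryption and decryption rates for each cipher; the names are mine.

```python
MIB = 1024 * 1024  # cryptsetup benchmark reports MiB/s
MB = 1000 * 1000   # assumption: dd's progress figure is decimal MB/s

# (encryption, decryption) in MiB/s, copied from the benchmark output above.
BENCHMARK_MIB_S = {
    "xchacha12,aes-adiantum": (159.7, 160.0),
    "xchacha20,aes-adiantum": (116.7, 169.1),
    "aes-xts": (52.5, 52.6),
}
SD_READ_MB_S = 45.0  # best-case sequential read from the SD card


def headroom(enc_mib_s, dec_mib_s, storage_mb_s=SD_READ_MB_S):
    """How many times faster the slower cipher direction is than the storage."""
    slowest_mb_s = min(enc_mib_s, dec_mib_s) * MIB / MB
    return slowest_mb_s / storage_mb_s


for name, (enc, dec) in BENCHMARK_MIB_S.items():
    print(f"{name:24s} {headroom(enc, dec):.1f}x the card's read speed")
```

Keep in mind that the benchmark runs in memory with the CPU otherwise idle, so a ratio close to 1 leaves little margin in practice.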

But not so fast.

Serial terminals vs. serial consoles

My primary use case for this device is to drive my actual old DEC vt510 terminal. I have long been able to do that by running a getty for my FTDI-based USB-to-serial converter on /dev/ttyUSB0. This gets me a login prompt, and I can do whatever I need from there.

This does not get me a serial console, however. The serial console would show kernel messages and could be used to interact with the pre-multiuser stages of the system, that is, everything before the login prompt. You can use it to access an emergency shell for repair, etc.

Although I have long booted that kernel with console=tty0 console=ttyUSB0,57600, the serial console has never worked, and I’d never bothered investigating, because the text terminal was sufficient.

You might be seeing where this is going: to have root on an encrypted LUKS volume, you have to enter the decryption password in the pre-multiuser environment (which happens to be on the initramfs).

So I started looking. First, I extracted the initrd with cpio and noticed that the ftdi_sio and usbserial modules weren’t present. Added them to /etc/initramfs-tools/modules and rebooted; no better.

So I found the kernel’s serial console guide, which explicitly notes “To use a serial port as console you need to compile the support into your kernel”. Well, I have no desire to custom-build a kernel on a Raspberry Pi with MicroSD storage every time a new kernel comes out.

I thought: well, I don’t strictly need the kernel to know about the console on /dev/ttyUSB0 for this; I just need the password prompt (which comes from userspace) to know about it.

So I looked at the initramfs code, and wouldn’t you know it, it uses /dev/console. Looking at /proc/consoles on that system, indeed it doesn’t show ttyUSB0. So even though it is possible to load the USB serial driver in the initramfs, there is no way to make the initramfs use it, because it only uses whatever the kernel recognizes as a console, and the kernel won’t recognize this. So there is no way to use a USB-to-serial adapter to enter a password for an encrypted root filesystem.
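The /proc/consoles check described above can be sketched as a small shell function. The name is_kernel_console is hypothetical, and the optional second argument (a file in the same format) is only there to make the function easy to try without touching the real /proc.

```shell
# Hypothetical helper: succeed if device name $1 is registered as a kernel console.
# $2 optionally names a file in /proc/consoles format (defaults to /proc/consoles).
is_kernel_console() {
    awk -v dev="$1" '$1 == dev { found = 1 } END { exit !found }' "${2:-/proc/consoles}"
}

# Usage:
#   is_kernel_console ttyUSB0 || echo "ttyUSB0 is not a kernel console"
```

Only the first column of each line (the device name) is compared; the flags and device numbers that follow it are ignored.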

Drat.

The on-board UARTs?

I can hear you now: “The Pi already has on-board serial support! Why not use that?”

Ah yes. I don’t want to use that because it is difficult to use, particularly if you want to have RTS/CTS hardware flow control (or DTR/DSR on these old terminals, but that’s another story, and I built a custom cable to map it to RTS/CTS anyhow).

Since you asked, I’ll take you down this unpleasant path.

The GPIO typically has only 2 pins for serial communication: 8 and 10, for TX and RX, respectively.

But dive in and you get into a confusing maze of UARTs. The “mini UART”, the one we are mostly familiar with on the Pi, does not support hardware flow control. The PL011 does. So the natural question is: how do we switch to the PL011, and what pins does it use? Great questions, and the answer is undocumented, at least for the Pi 4.

According to that page, for the Pi 4, the primary UART is UART1, UART1 is the mini UART, “the secondary UART is not normally present on the GPIO connector” and might be used by Bluetooth anyway, and there is no documented pin for RTS/CTS anyhow. (Let alone some of the other lines modems use.) There are supposed to be /dev/ttyAMA* devices, but I don’t have those. There’s an enable_uart boot configuration option (set in config.txt), which does things like stop the mini UART from changing baud rates every time the VPU changes clock frequency (I am not making this up!), but doesn’t seem to control the PL011 UART selection. This page has a program to do it, and map some GPIO pins to RTS/CTS, in theory.

Even if you get all that working, you still have the problem that the Pi UARTs (all of them, of every type) are 3.3V, while RS-232 swings to ±5V or more, so unless you get a level converter, you will fry your Pi the moment you connect it to something useful. So, you’re probably looking at some soldering and such just to build a cable that will work with an iffy stack.

So, I could probably make it work given enough time, but I don’t have that time to spare working with weird Pi serial problems, so I have always used USB converters when I need serial from a Pi.

Conclusion

I bought a fanless x86 micro PC with an N100 chip and all the ports I might want: a couple of DB-9 serial ports, some Ethernet ports, HDMI and VGA ports, and built-in wifi. Done.

ARM is great, ARM is terrible (and so is RISC-V) 10 Sep 5:16 AM (last month)

I’ve long been interested in new and different platforms. I ran Debian on an Alpha back in the late 1990s and was part of the Alpha port team; then I helped bootstrap Debian on amd64. I’ve got somewhere around 8 Raspberry Pi devices in active use right now, and the free NNCPNET Internet email service I manage runs on an ARM instance at a cloud provider.

ARM-based devices are cheap in a lot of ways: they use little power and there are many single-board computers based on them that are inexpensive. My 8-year-old’s computer is a Raspberry Pi 400, in fact.

So I like ARM.

I’ve been looking for ARM devices that have accelerated AES (Raspberry Pi 4 doesn’t) so I can use full-disk encryption with them. There are a number of options, since ARM devices are starting to go more mid-range. Radxa’s ROCK 5 series of SBCs goes up to 32GB RAM. The Orange Pi 5 Max and Ultra have up to 16GB RAM, as does the Raspberry Pi 5. Pine64’s Quartz64 has up to 8GB of RAM. I believe all of these have the ARM cryptographic extensions. They’re all small and most are economical.

But I also dislike ARM. There is a terrible lack of standardization in the ARM community. They say their devices run Linux, but the default there is that every vendor has their own custom Debian fork, and quite likely kernel fork as well. Most don’t maintain them very well.

Imagine if you were buying x86 hardware. You might have to manage AcerOS, Dellbian, HPian, etc. Most of them have no security support (particularly for the kernel). Some are based on Debian 11 (released in 2021), some Debian 12 (released in 2023), and none on Debian 13 (released a month ago).

That is exactly the situation we have on ARM. While Raspberry Pi 4 and below can run Debian trixie directly, Raspberry Pi has not bothered to upstream support for the Pi 5 yet, and Raspberry Pi OS is only based on Debian bookworm (released in 2023) and very explicitly does not support a key Debian feature: you can’t upgrade from one Raspberry Pi OS release to the next, so it’s a complete reinstall every 2 years instead of just an upgrade. OrangePiOS only supports Debian bookworm — but notably, their kernel is mostly stuck at 5.10 for every image they have (bookworm shipped with 6.1 and bookworm-backports supports 6.12).

Radxa has a page on running Debian on one specific board, but they seem not to support Debian directly, only their fork, Radxa OS. There’s a different installer for every board; for instance, this one for the Rock 4D. Looking at it, I can see that it uses files from here and here, with custom kernel, gstreamer, u-boot, and they put zfs in main for some reason.

From Pine64, the Quartz64 seems to be based on an ancient 4.6 or 4.19 kernel. Perhaps, though, one might be able to use Debian’s Pine A64+ instructions on it. Trixie doesn’t have a u-boot image for the Quartz64 but it does have device tree files for it.

RISC-V seems to be even worse; not only do we have this same issue there, but support in trixie is more limited and so is performance among the supported boards.

The alternative is x86-based mini PCs. There are a bunch based on the N100, N150, or Celeron. Many of them support AES-NI and the prices are roughly in line with the higher-end ARM units. There are some interesting items out there; for instance, the Radxa X4 SBC features both an N100 and a RP2040. Fanless mini PCs are available from a number of vendors. Companies like ZimaBoard have interesting options like the ZimaBlade also.

The difference in power is becoming less significant; it seems the newer ARM boards need 20W or 30W power supplies, and that may put them in the range of the mini PCs. As for cost, the newer ARM boards need a heat sink and fan, so by the time you add SBC, fan, storage, etc. you’re starting to get into the price range of the mini PCs.

It is great to see all the options of small SBCs with ARM and RISC-V processors, but at some point you’ve got to throw up your hands and go “this ecosystem has a lot of problems” and consider just going back to x86. I’m not sure if I’m quite there yet, but I’m getting close.

Update 2025-09-11: I found a performant encryption option for the Pi 4, but was stymied by serial console problems; see the update post.

btrfs on a Raspberry Pi 9 Sep 6:01 AM (last month)

I’m something of a filesystem geek, I guess. I first wrote about ZFS on Linux 14 years ago, and even before I used ZFS, I had used ext2/3/4, jfs, reiserfs, xfs, and no doubt some others.

I’ve also used btrfs. I last posted about it in 2014, when I noted it has some advantages over ZFS, but also some drawbacks, including a lot of kernel panics.

Since that comparison, ZFS has gained trim support and btrfs has stabilized. The btrfs status page gives you an accurate idea of what is good to use on btrfs.

Background: Moving towards ZFS and btrfs

I have been trying to move everything away from ext4 and onto either ZFS or btrfs. There are generally several reasons for that:

- The checksums for every block help detect potential silent data corruption

- Instant snapshots make consistent backups of live systems a lot easier, and without the hassle and wasted space of LVM snapshots

- Transparent compression and dedup can save a lot of space in storage-constrained environments

For any machine with at least 32GB of RAM (plus my backup server, which has only 8GB), I run ZFS. While it lacks some of the flexibility of btrfs, it has polish. zfs list -o space shows a useful space accounting. zvols can be behind VMs. With my project simplesnap, I can easily send hourly backups with ZFS, and I choose to send them over NNCP in most cases.

I have a few VMs in the cloud (running Debian, of course) that I use to host things like this blog, my website, my gopher site, the quux NNCP public relay, and various other things.

In these environments, storage space can be expensive. For that matter, so can RAM. ZFS is RAM-hungry, so that rules out ZFS. I’ve been running btrfs in those environments for a few years now, and it’s worked out well. I do async dedup, lzo or zstd compression depending on the needs, and the occasional balance and defrag.

Filesystems on the Raspberry Pi

I run Debian trixie on all my Raspberry Pis; not Raspbian or Raspberry Pi OS for a number of reasons. My 8-yr-old uses a Raspberry Pi 400 as her primary computer — and loves it! She doesn’t do web browsing, but plays Tuxpaint, some old DOS games like Math Blaster via dosbox, and uses Thunderbird for a locked-down email account.

But it was SLOW. Just really, glacially, slow, especially for Thunderbird.

My first step to address that was to get a faster MicroSD card to hold the OS. That was a dramatic improvement. It’s still slow, but a lot faster.

Then, I thought, maybe I could use btrfs with LZO compression to reduce the amount of I/O and speed things up further? Analysis showed things were mostly slow due to I/O, not CPU, constraints.

The conversion

Rather than use the btrfs in-place conversion from ext4, I opted to dar it up (like tar), run mkfs.btrfs on the SD card, then unpack the archive back onto it. Easy enough, right?

Well, not so fast. The MicroSD card is 128GB, and the entire filesystem is 6.2GB. But after unpacking 100MB onto it, I got an out of space error.

btrfs has this notion of block groups. By default, each block group is dedicated to either data or metadata. btrfs fi df and btrfs fi usage will show you details about the block groups.

btrfs allocates block groups greedily (the ssd_spread mount option I use may have exacerbated this). What happened was it allocated almost the entire drive to data block groups, trying to spread the data across it. It so happened that dar archived some larger files first (maybe /boot), so btrfs was allocating data and metadata block groups assuming few large files. But then it started unpacking one of the directories in /usr with lots of small files (maybe /usr/share/locale). It quickly filled up the metadata block group, and since the entire SD card had been allocated to different block groups, I got ENOSPC.

Deleting a few files and running btrfs balance resolved it; now it allocated 1GB to metadata, which was plenty. I re-ran the dar extract and now everything was fine. See more details on btrfs balance and block groups.
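The situation above (ENOSPC on a mostly empty card) shows up as a metadata block group that is nearly full while data block groups are nearly empty. A minimal sketch for spotting that, assuming the raw-byte output of btrfs filesystem df -b; the helper name bg_usage is hypothetical.

```shell
# Hypothetical helper: read `btrfs filesystem df -b <mount>` output on stdin
# and print how full each block-group type is, as a whole-number percentage.
bg_usage() {
    awk '
        {
            type = $1; sub(/,$/, "", type)       # "Metadata," -> "Metadata"
            total = 0; used = 0
            for (i = 2; i <= NF; i++) {
                field = $i; sub(/,$/, "", field)
                if (field ~ /^total=/)      { sub(/^total=/, "", field); total = field + 0 }
                else if (field ~ /^used=/)  { sub(/^used=/, "", field);  used = field + 0 }
            }
            if (total > 0) printf "%s %d%%\n", type, used * 100 / total
        }
    '
}

# Usage (as root):
#   btrfs filesystem df -b / | bg_usage
```

A filtered balance such as btrfs balance start -dusage=10 on the mount point is one commonly suggested way to hand nearly empty data block groups back to the allocator; the 10 here is an assumed starting value, not a recommendation from the post.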

This was the only btrfs problem I encountered.

Benchmarks

I timed two things prior to switching to btrfs: how long it takes to boot (measured from the moment I turn on the power until the moment the XFCE login box is displayed), and how long it takes to start Thunderbird.

After switching to btrfs with LZO compression, somewhat to my surprise, both measures were exactly the same!

Why might this be?

It turns out that SD cards are widely understood to be pathologically bad at random reads. Boot and Thunderbird both are likely doing a lot of small random reads, not large streaming reads. Therefore, it may be that even though I have reduced the total I/O needed, the impact is insubstantial because the real bottleneck is the “seeks” across the disk.

Still, I gain the better backup support and silent data corruption detection, so I kept btrfs.

SSD mount options and MicroSD endurance

btrfs has several mount options specifically relevant to SSDs. Aside from the obvious trim support, they are ssd and ssd_spread. The documentation on this is vague and my attempts to learn more about it found a lot of information that was outdated or unsubstantiated folklore.

Some reports suggest that “older” SSDs will benefit from ssd_spread, but that it may have no effect or even a harmful effect on newer ones, and can at times cause fragmentation or write amplification. I could find nothing to back this up, though. And it seems particularly difficult to figure out what kind of wear leveling SSD firmware does. MicroSD firmware is likely to be on the less-advanced side, but still, I have no idea what it might do. In any case, with btrfs not updating blocks in-place, it should be better than ext4 in the most naive case (no wear leveling at all) but may have somewhat more write traffic for the pathological worst case (frequent updates of small portions of large files).

One anecdotal report I read — and can’t find anymore, somehow — was from a person that had set up a sort of torture test for SD cards, with reports that ext4 lasted a few weeks or months before the MicroSDs failed, while btrfs lasted years.

If you are looking for a MicroSD card, by the way, The Great MicroSD Card Survey is a nice place to start.

For longevity: I mount all my filesystems with noatime already, so I continue to recommend that. You can also consider limiting the log size in /etc/systemd/journald.conf and running a daily fstrim (which may be more successful than live trims on all filesystems).
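A minimal sketch of the journal limit and daily trim, written as systemd drop-ins rather than edits to the main files. The function name, the 50M cap, and the drop-in file names are all assumptions for illustration; the function takes a root directory so it can be tried in a scratch directory first.

```shell
# Hypothetical helper: write systemd drop-ins under root directory $1
# (pass / on a live system, or a scratch directory to inspect the result).
write_longevity_dropins() {
    root=${1%/}
    mkdir -p "$root/etc/systemd/journald.conf.d" \
             "$root/etc/systemd/system/fstrim.timer.d"

    # Cap the persistent journal size; 50M is an arbitrary illustrative value.
    cat > "$root/etc/systemd/journald.conf.d/size.conf" <<'EOF'
[Journal]
SystemMaxUse=50M
EOF

    # Run fstrim.timer daily. The empty OnCalendar= line clears the
    # packaged schedule before the new one is added.
    cat > "$root/etc/systemd/system/fstrim.timer.d/daily.conf" <<'EOF'
[Timer]
OnCalendar=
OnCalendar=daily
EOF
}

# Usage on a live system (as root):
#   write_longevity_dropins /
#   systemctl daemon-reload
#   systemctl restart systemd-journald
#   systemctl enable --now fstrim.timer
```

Drop-ins keep the distribution's own journald.conf and fstrim.timer untouched, which makes the change easy to remove later.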

Conclusion

I’ve been pretty pleased with btrfs. The concerns I have today relate to block groups and maintenance (periodic balance and maybe a periodic defrag). I’m not sure I’d be ready to say “put btrfs on the computer you send to someone that isn’t Linux-savvy” because the chances of running into issues are higher than with ext4. Still, for people that have some tech savvy, btrfs can improve reliability and performance in other ways.

Dreams of Late Summer 6 Sep 2:07 PM (last month)

Here on a summer night in the grass and lilac smell

Drunk on the crickets and the starry sky,

Oh what fine stories we could tell

With this moonlight to tell them by.

A summer night, and you, and paradise,

So lovely and so filled with grace,

Above your head, the universe has hung its lights,

And I reach out my hand and touch your face.

I sit outside today, at the picnic table on our side porch. I was called out here; in late summer, the cicadas and insects of the plains are so loud that I can hear them from inside our old farmhouse.

I sit and hear the call and response of buzzing cicadas, the chirp of crickets during their intermission. The wind rustles off and on through the treetops. And now our old cat has heard me, and she comes over, spreading tan cat hair across my screen. But I don’t mind; I hear her purr as she comes over to relax nearby.

Aside from the gentle clack of my keyboard as I type, I hear no sounds of humans. Occasionally I hear the distant drone of a small piston airplane, and sometimes the faint horn of a train, 6 miles away.

As I look up, I see grass, the harvested wheat field, the trees, and our gravel driveway. Our road is on the other side of a hill. I see no evidence of it from here, but I know it’s there. Maybe 2 or 3 vehicles will pass on a day like today; if they’re tall delivery trucks, I’ll see their roof glide silently down the road, and know the road is there. The nearest paved road is several miles away, so not much comes out here.

I reflect on those times years ago, when this was grandpa’s house, and the family would gather on Easter. Grandpa hid not just Easter eggs, but Easter bags all over the yard. This yard. Here’s the tree that had a nice V-shaped spot to hide things in; there’s the other hiding spot.

I reflect on the wildlife. This afternoon, it’s the insects that I hear. On a foggy, cool, damp morning, the birds will be singing from all the trees, the fog enveloping me with unseen musical joy. On a quiet evening, the crickets chirp and the coyotes howl in the distance.

Now the old cat has found my lap. She sits there purring, tail swishing. 12 years ago when she was a kitten, our daughter hadn’t yet been born. She is old and limps, and is blind in one eye, but beloved by all. Perfectly content with life, she stretches and relaxes.

I have visited many wonderful cities in this world. I’ve seen Aida at the Metropolitan Opera, taken trains all over Europe, wandered the streets of San Francisco and Brussels and Lindos, visited the Christmas markets in the lightly-snowy evenings in Regensburg, felt the rumble of the Underground beneath me in London. But rarely do the city people come here.

Oh, some of them think they’ve visited the country. But no, my friends, no; if you don’t venture beyond the blacktop roads, you’ve not experienced it yet. You’ve not gone to a restaurant “in town”, recognized by several old friends. You’ve not stopped by the mechanic, the third generation of that family fixing cars that belong to yours, who more often than not tells you that you don’t need to fix that something just yet. You’ve not sat outside, in this land where regular people each live in their own quiet Central Park. You’ve not seen the sunset, with its majestic reds and oranges and purples and blues and grays, stretching across the giant iMax dome of the troposphere, suspended above the hills and trees to the west. You’ve not visited the grocery store, with your car unlocked and keys in the ignition, unconcerned about vehicle theft. You’ve not struggled with words when someone asks “what city are you from” and you lack the vocabulary to help them understand what it means when you say “none”.

Out there in the land of paved roads and bright lights, the problems of the world churn. The problems near and far: physical and mental health challenges with people we know, global problems with politics and climate.

But here, this lazy summer afternoon, I forget about the land of the paved roads and bright lights. As it should be; they’ve forgotten the land of the buzzing cicadas and muddy roads.

I believe in impulse, in all that is green,

In the foolish vision that comes out true.

I believe that all that is essential is unseen,

And for this lifetime, I believe in you.

All of the lovers and the love they made:

Nothing that was between them was a mistake.

All that we did for love’s sake,

Was not wasted and will never fade.

All who have loved will be forever young

And walk in grandeur on a summer night

Along the avenue.

They live in every song that is sung,

In every painting of pure light,

In every pas de deux.

O love that shines from every star,

Love reflected in the silver moon;

It is not here, but it is not far.

Not yet, but it will be here soon.

No two days are alike. But this day comes whenever I pause to let it.

May you find the buzzing cicadas and muddy roads near you, wherever you may be.

Poetry from “A Summer Night” by Garrison Keillor

I Learned We All Have Linux Seats, and I’m Not Entirely Pleased 11 Jun 6:12 AM (4 months ago)

I recently wrote about How to Use SSH with FIDO2/U2F Security Keys, which I now use on almost all of my machines.

The last one that needed this was my Raspberry Pi hooked up to my DEC vt510 terminal and IBM mechanical keyboard. Yes, I do still use that setup!

To my surprise, generating a key on it failed. I very quickly saw that /dev/hidraw0 had incorrect permissions, accessible only to root.

On other machines, it looks like this:

crw-rw----+ 1 root root 243, 16 May 24 16:47 /dev/hidraw16

And, if I run getfacl on it, I see:

# file: dev/hidraw16
# owner: root
# group: root
user::rw-
user:jgoerzen:rw-
group::---
mask::rw-
other::---

Yes, something was setting an ACL on it. Thus began the saga to figure out what was doing that.
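One way to compare machines quickly is to test whether the getfacl output contains a named-user entry at all. This is only a sketch; the helper name acl_names_user is hypothetical, and it assumes the username contains no regular-expression metacharacters.

```shell
# Hypothetical helper: succeed if `getfacl` output on stdin contains a
# named-user ACL entry for user $1 (a line such as "user:jgoerzen:rw-").
acl_names_user() {
    grep -q "^user:$1:"
}

# Usage:
#   getfacl /dev/hidraw16 2>/dev/null | acl_names_user "$USER" \
#       && echo "ACL entry present" || echo "no ACL entry for $USER"
```

The owner entry (user::rw-) has an empty name field, so it does not match; only entries added for a specific user do.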

Firing up inotifywatch, I saw it was systemd-udevd or its udev-worker. But cranking up logging on that to maximum only showed me that uaccess was somehow doing this.

I started digging. uaccess turned out to be almost entirely undocumented. People say to use it, but there’s no description of what it does or how. Its purpose appears to be to grant access to devices to those logged in to a machine by dynamically adding them to ACLs for devices. OK, that’s a nice goal, but why was machine A doing this and not machine B?

I dug some more. I came across a hint that uaccess may only do that for a “seat”. A seat? I’ve not heard of that in Linux before.

Turns out there’s some information (older and newer) about this out there. Sure enough, on the machine with KDE, loginctl list-sessions shows me on seat0, but on the machine where I log in from ttyUSB0, it shows an empty seat.

But how to make myself part of the seat? I tried various udev rules to add the “seat” or “master-of-seat” tags, but nothing made any difference.

I finally gave up and did the old-fashioned rule to just make it work already:

TAG=="security-device",SUBSYSTEM=="hidraw",GROUP="mygroup"

I still don’t know how to teach logind to add a seat for ttyUSB0, but oh well. At least I learned something. An annoying something, but hey.

This all had a laudable goal, but when there are so many layers of indirection, poorly documented, with poor logging, it gets pretty annoying.

How to Use SSH with FIDO2/U2F Security Keys 17 May 4:53 AM (5 months ago)

For many years now, I’ve been using an old YubiKey along with the free tier of Duo Security to add a second factor to my SSH logins. This is klunky, and has a number of drawbacks (dependency on a cloud service and Internet among them).

I decided it was time to upgrade, so I recently bought a couple of YubiKey 5 series security keys. These support FIDO2/U2F, which makes it so much easier to integrate with ssh.

But in researching how to do this, I found a lot of pages online with poor instructions. Either they didn’t explain what was going on very well, or suggested what I came to learn were insecure practices, or — most often — both.

It turns out this whole process is quite easy. But I wanted to understand how it worked.

So, I figured it out, set it up myself, and then put up a new, comprehensive page on my website: https://www.complete.org/easily-using-ssh-with-fido2-u2f-hardware-security-keys/. I hope it helps!

Memoirs of the Early Internet 26 Apr 10:22 AM (5 months ago)

The Internet is an amazing place, and occasionally you can find things on the web that have somehow lingered online for decades longer than you might expect.

Today I’ll take you on a tour of some parts of the early Internet.

The Internet, of course, is a “network of networks” and part of its early (and continuing) promise was to provide a common protocol that all sorts of networks can use to interoperate with each other. In the early days, UUCP was one of the main ways universities linked with each other, and eventually UUCP and the Internet sort of merged (but that’s a long story).

Let’s start with some Usenet maps, which were an early way to document the UUCP modem links between universities. Start with this PDF. The first page is a Usenet map (which at the time mostly flowed over UUCP) from April of 1981. Notice that ucbvax, a VAX system at Berkeley, was central to the map.

ucbvax continued to be a central node for UUCP for more than a decade; on page 5 of that PDF, you’ll see that it asks for a “Path from a major node (eg, ucbvax, decvax, harpo, duke)”. Pre-Internet email addresses used a path; eg, mark@ucbvax was duke!decvax!ucbvax!mark to someone. You had to specify the route from your system to the recipient on your email To line. If you gave out your email address on a business card, you would start it from a major node like ucbvax, and the assumption was that everyone would know how to get from their system to the major node.
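To make the path notation concrete, a bang path can be split into its hops with a one-line shell function. The name bang_hops is hypothetical; the last line printed is the user, and everything before it is the chain of systems the mail passes through, in order.

```shell
# Hypothetical helper: print each hop of a UUCP bang path on its own line.
# The final line is the recipient's username on the last system.
bang_hops() {
    printf '%s\n' "$1" | tr '!' '\n'
}

# Usage (single quotes keep interactive shells from treating ! specially):
#   bang_hops 'duke!decvax!ucbvax!mark'
```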

On August 19, 1994, ucbvax was finally turned off. TCP/IP had driven UUCP into more obscurity; by then, it was mostly used by people without a dedicated Internet connection to get on the Internet, rather than an entire communication network of its own. A few days later, Cliff Frost posted a memoir of ucbvax, an obscure bit of Internet lore that is fun to read.

UUCP was ad-hoc, and by 1984 there was an effort to make a machine-parsable map to help automate routing on UUCP. This was called the pathalias project, and there was a paper about it. The Linux network administration guide even includes a section on pathalias.

Because UUCP mainly flowed over phone lines, long distance fees made it quite expensive. In 1985, the Stargate Project was formed, with the idea of distributing Usenet by satellite. The satellite link was short-lived, but the effort eventually morphed into UUNET. It was initially a non-profit, but eventually became a commercial backbone provider, and later ISP. Over a long series of acquisitions, UUNET is now part of Verizon. An article in ;login: is another description of this history.

IAPS has an Internet in 1990 article, which includes both pathalias data and an interesting map of domain names to UUCP paths.

As I was pondering what interesting things a person could do with NNCPNET Internet email, I stumbled across a page on getting FTP files via e-mail. Yes, that used to be a thing! I remember ftpmail@decwrl.dec.com.

It turns out that page is from a copy of EFF’s (Extended) Guide to the Internet from 1994. Wow, what a treasure! It has entries such as A Slice of Life in my Virtual Community, libraries with telnet access, Gopher, A Statement of Principle by Bruce Sterling, and I could go on. You can also get it as a PDF from Internet Archive.

UUCP is still included with modern Linux and BSD distributions. It was part of how I experienced the PC and Internet revolution in rural America. It lacks modern security, but NNCP is to UUCP what ssh is to telnet.

NNCPNET Can Optionally Exchange Internet Email 25 Apr 5:01 PM (6 months ago)

A few days ago, I announced NNCPNET, the email network based atop NNCP. NNCPNET lets anyone run a real mail server on a network that supports all sorts of topologies for transport, from Internet to USB drives. And verification is done at the NNCP protocol level, so a whole host of Internet email bolt-ons (SPF, DMARC, DKIM, etc.) are unnecessary.

Shortly after announcing NNCPNET, I added an Internet bridge. This lets you get your own DOMAIN.nncpnet.org domain, and from there route email to and from the Internet using a gateway node. Simple, effective, and a way to get real email to and from your laptop or Raspberry Pi without having to have a static IP, SPF, DMARC, DKIM, etc.

It’s a volunteer-run, free, service. Give it a try!

Announcing the NNCPNET Email Network 9 Apr 4:52 PM (6 months ago)

From 1995 to 2019, I ran my own mail server. It began with a UUCP link, an expensive long-distance call for me then. Later, I ran a mail server in my apartment, then ran it as a VPS at various places.

But running an email server got difficult. You can’t just run it on a residential IP. Now there’s SPF, DKIM, DMARC, and TLS to worry about. I recently reviewed mail hosting services, and don’t get me wrong: I still use one, and probably will, because things like email from my bank are critical.

But we’ve lost the ability to tinker, to experiment, to have fun with email.

Not anymore. NNCPNET is an email system that runs atop NNCP. I’ve written a lot about NNCP, including a less-ambitious article about point-to-point email over NNCP 5 years ago. NNCP is to UUCP what ssh is to telnet: a modernization, with modern security and features. NNCP is an asynchronous, onion-routed, store-and-forward network. It can use as a transport anything from the Internet to a USB stick.

NNCPNET is a set of standards, scripts, and tools to facilitate a broader email network using NNCP as the transport. You can read more about NNCPNET on its wiki!

The “easy mode” is to use the Docker container (multi-arch, so you can use it on your Raspberry Pi) I provide, which bundles:

- Exim mail server

- NNCP

- Verification and routing tools I wrote. Because NNCP packets are encrypted and signed, we get sender verification “for free”; my tools ensure the From: header corresponds with the sending node.

- Automated nodelist tools; it will request daily nodelist updates and update its configurations accordingly, so new members can be communicated with

- Integration with the optional, opt-in Internet email bridge

It is open to all. The homepage has a more extensive list of features.

I even have mailing lists running on NNCPNET; see the interesting addresses page for more details.

There is extensive documentation, and of course the source to the whole thing is available.

The gateway to Internet SMTP mail is off by default, but can easily be enabled for any node. It is a full participant, in both directions, with SPF, DKIM, DMARC, and TLS.

You don’t need any inbound ports for any of this. You don’t need an always-on Internet connection. You don’t even need an Internet connection at all. You can run it from your laptop and still use Thunderbird to talk to it via its optional built-in IMAP server.

Why You Should (Still) Use Signal As Much As Possible 27 Mar 6:51 PM (6 months ago)

As I write this in March 2025, there is a lot of confusion about Signal messenger due to the recent news of people using Signal in government, and subsequent leaks.

The short version is: there was no problem with Signal here. People were using it because they understood it to be secure, not the other way around.

Both the government and the Electronic Frontier Foundation recommend people use Signal. This is an unusual alliance, and in the case of the government, was prompted because it understood other countries had a persistent attack against American telephone companies and SMS traffic.

So let’s dive in. I’ll cover some basics of what security is, what happened in this situation, and why Signal is a good idea.

This post isn’t for programmers that work with cryptography every day. Rather, I hope it can make some of these concepts accessible to everyone else.

What makes communications secure?

When most people are talking about secure communications, they mean some combination of these properties:

- Privacy - nobody except the intended recipient can decode a message.

- Authentication - guarantees that the person you are chatting with really is the intended recipient.

- Ephemerality - preventing a record of the communication from being stored. That is, making it more like a conversation around the table than a written email.

- Anonymity - keeping your set of contacts to yourself and even obfuscating the fact that communications are occurring.

If you think about it, most people care the most about the first two. In fact, authentication is a key part of privacy. There is an attack known as man in the middle in which somebody pretends to be the intended recipient. The interceptor reads the messages, and then passes them on to the real intended recipient. So we can’t really have privacy without authentication.
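For the programmers reading along, here is a minimal sketch of how comparing key fingerprints can expose a man in the middle. It is only an illustration: the `fingerprint` and `keys_match` functions, the placeholder key bytes, and the grouped-hex format are all made up for this example, and this is not how Signal actually computes its safety numbers.

```python
import hashlib
import hmac


def fingerprint(public_key: bytes) -> str:
    """Return a short, human-comparable digest of a public key.

    Hypothetical format: the first 32 hex characters of SHA-256,
    grouped in fours so two people can read it aloud to each other.
    """
    digest = hashlib.sha256(public_key).hexdigest()
    return " ".join(digest[i:i + 4] for i in range(0, 32, 4))


def keys_match(key_i_received: bytes, fingerprint_from_friend: str) -> bool:
    """Compare the key my app received against what my friend read to me."""
    return hmac.compare_digest(fingerprint(key_i_received), fingerprint_from_friend)


# Placeholder bytes standing in for real public keys (assumption).
alice_key = b"alice-public-key-bytes"
mallory_key = b"mallory-public-key-bytes"  # an interceptor's substitute key

# Alice reads her fingerprint to me in person or over a call I trust.
read_aloud = fingerprint(alice_key)

print(keys_match(alice_key, read_aloud))    # the key really is Alice's
print(keys_match(mallory_key, read_aloud))  # someone swapped the key in transit
```

The point is that the comparison happens over a channel the interceptor doesn’t control. If the key your app received doesn’t produce the fingerprint your friend reads to you, somebody is sitting in the middle.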

I’ll have more to say about these later. For now, let’s discuss attack scenarios.

What compromises security?

There are a number of ways that security can be compromised. Let’s think through some of them:

Communications infrastructure snooping

Let’s say you used no encryption at all, and connected to public WiFi in a coffee shop to send your message. Who all could potentially see it?

- The owner of the coffee shop’s WiFi

- The coffee shop’s Internet provider

- The recipient’s Internet provider

- Any Internet providers along the network between the sender and the recipient

- Any government or institution that can compel any of the above to hand over copies of the traffic

- Any hackers that compromise any of the above systems

Back in the early days of the Internet, most traffic had no encryption. People were careful about putting their credit cards into webpages and emails because they knew it was easy to intercept them. We have been on a decades-long evolution towards more pervasive encryption, which is a good thing.

Text messages (SMS) follow a similar path to the above scenario, and are unencrypted. We know that all of the above are ways people’s texts can be compromised; for instance, governments can issue search warrants to obtain copies of texts, and China is believed to have a persistent hack into western telcos. SMS fails all four of our attributes of secure communication above (privacy, authentication, ephemerality, and anonymity).

Also, think about what information is collected from SMS and by whom. Texts you send could be retained in your phone, the recipient’s phone, your phone company, their phone company, and so forth. They might also live in cloud backups of your devices. You only have control over your own phone’s retention.

So defenses against this involve things like:

- Strong end-to-end encryption, so no intermediate party – even the people that make the app – can snoop on it.

- Using strong authentication of your peers

- Taking steps to prevent even app developers from being able to see your contact list or communication history

You may see some other apps saying they use strong encryption or use the Signal protocol. But while they may do that for some or all of your message content, they may still upload your contact list, history, location, etc. to a central location where it is still vulnerable to these kinds of attacks.

When you think about anonymity, think about it like this: if you send a letter to a friend every week, every postal carrier that transports it – even if they never open it or attempt to peek inside – will be able to read the envelope and know that you communicate on a certain schedule with that friend. The same can be said of SMS, email, or most encrypted chat operators. Signal’s design prevents it from retaining even this information, though nation-states or ISPs might still be able to notice patterns (every time you send something via Signal, your contact receives something from Signal a few milliseconds later). It is very difficult to provide perfect anonymity from well-funded adversaries, even if you can provide very good privacy.

Device compromise

Let’s say you use an app with strong end-to-end encryption. This takes away some of the easiest ways someone could get to your messages. But it doesn’t take away all of them.

What if somebody stole your phone? Perhaps the phone has a password, but if an attacker pulled out the storage unit, could they access your messages without a password? Or maybe they somehow trick or compel you into revealing your password. Now what?

An even simpler attack doesn’t require them to steal your device at all. All they need is a few minutes with it to steal your SIM card. Now they can receive any texts sent to your number - whether from your bank or your friend. Yikes, right?

Signal stores your data in an encrypted form on your device. It can protect it in various ways. One of the most important protections is ephemerality - it can automatically delete your old texts. A text that is securely erased can never fall into the wrong hands if the device is compromised later.
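If you like to see ideas as code, here is a minimal sketch of the ephemerality idea: messages older than a chosen lifetime are simply dropped. The `Message` class and `purge_expired` function are hypothetical names for this illustration, not Signal’s implementation, and a real app also has to securely erase the data from storage, which this sketch does not attempt.

```python
from dataclasses import dataclass


@dataclass
class Message:
    text: str
    sent_at: float  # seconds since some reference time


def purge_expired(messages, now, ttl_seconds):
    """Keep only messages younger than ttl_seconds; the rest are forgotten."""
    return [m for m in messages if now - m.sent_at < ttl_seconds]


one_week = 7 * 24 * 60 * 60
now = 1_000_000.0  # fixed "current time" so the example is repeatable

inbox = [
    Message("lunch tomorrow?", sent_at=now - 60),             # a minute old
    Message("here is the door code", sent_at=now - 2 * one_week),  # two weeks old
]

inbox = purge_expired(inbox, now, ttl_seconds=one_week)
print([m.text for m in inbox])  # only the recent message remains
```

Anything that has already been purged can’t be read by whoever gets hold of the device later, which is the whole appeal.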

An actively-compromised phone, though, could still give up secrets. For instance, what if a malicious keyboard app sent every keypress to an adversary? Signal is only as secure as the phone it runs on – but still, it protects against a wide variety of attacks.

Untrustworthy communication partner

Perhaps you are sending sensitive information to a contact, but that person doesn’t want to keep it in confidence. There is very little you can do about that technologically; with pretty much any tool out there, nothing stops them from taking a picture of your messages and handing the picture off.

Environmental compromise

Perhaps your device is secure, but a hidden camera still captures what’s on your screen. You can take some steps against things like this, of course.

Human error

Sometimes humans make mistakes. For instance, the reason a reporter got copies of messages recently was that a participant in a group chat accidentally added him (presumably that participant meant to add someone else and just selected the wrong name). Phishing attacks can trick people into revealing passwords or other sensitive data. Humans are, quite often, the weakest link in the chain.

Protecting yourself

So how can you protect yourself against these attacks? Let’s consider:

- Use a secure app like Signal that uses strong end-to-end encryption where even the provider can’t access your messages

- Keep your software and phone up-to-date

- Be careful about phishing attacks and who you add to chat rooms

- Be aware of your surroundings; don’t send sensitive messages where people might be looking over your shoulder with their eyes or cameras

There are other methods besides Signal. For instance, you could install GnuPG (GPG) on a laptop that has no WiFi card or any other way to connect it to the Internet. You could always type your messages on that laptop, encrypt them, copy the encrypted text to a floppy disk (or USB drive), take that disk or drive to your Internet-connected computer, and send the encrypted message by email or something. It would be exceptionally difficult to break the privacy of messages in that case (though anonymity would be mostly lost). Even if someone got the password to your “secure” laptop, it wouldn’t do them any good unless they physically broke into your house or something. In some ways, it is probably safer than Signal. (For more on this, see my article How gapped is your air?)

But that approach is hard to use. Many people aren’t familiar with GnuPG. You don’t have the convenience of sending a quick text message from anywhere. Security that is hard to use most often simply isn’t used. That is, you and your friends will probably just revert to using insecure SMS instead of this GnuPG approach because SMS is so much easier.

Signal strikes a unique balance of providing very good security while also being practical, easy, and useful. For most people, it is the most secure option available.

Signal is also open source; you don’t have to trust that it is as secure as it says, because you can inspect it for yourself. Also, while it’s not federated, I previously addressed that.

Government use

If you are a government, particularly one that is highly consequential to the world, you can imagine that you are a huge target. Other nations are likely spending billions of dollars to compromise your communications. Signal itself might be secure, but if some other government can add spyware to your phones, or conduct a successful phishing attack, you can still have your communications compromised.

I have no direct knowledge, but I think it is generally understood that the US government maintains communications networks that are entirely separate from the Internet and can only be accessed from secure physical locations and secure rooms. These can be even more secure than the average person using Signal because they can protect against things like environmental compromise, human error, and so forth. The scandal in March of 2025 happened because government employees were using Signal rather than official government tools for sensitive information, had taken advantage of Signal’s ephemerality (laws require records to be kept), and through apparent human error had directly shared this information with a reporter. Presumably a reporter would have lacked access to the restricted communications networks in the first place, so that wouldn’t have been possible.

This doesn’t mean that Signal is bad. It just means that somebody that can spend billions of dollars on security can be more secure than you. Signal is still a great tool for people, and in many cases defeats even those that can spend lots of dollars trying to defeat it.

And remember - to use those restricted networks, you have to go to specific rooms in specific buildings. They are still not as convenient as what you carry around in your pocket.

Conclusion

Signal is practical security. Do you want phone companies reading your messages? How about Facebook or X? Have those companies demonstrated that they are completely trustworthy throughout their entire history?

I say no. So, go install Signal. It’s the best, most practical tool we have.

This post is also available on my website, where it may be periodically updated.

Censorship Is Complicated: What Internet History Says about Meta/Facebook 8 Jan 5:59 AM (9 months ago)

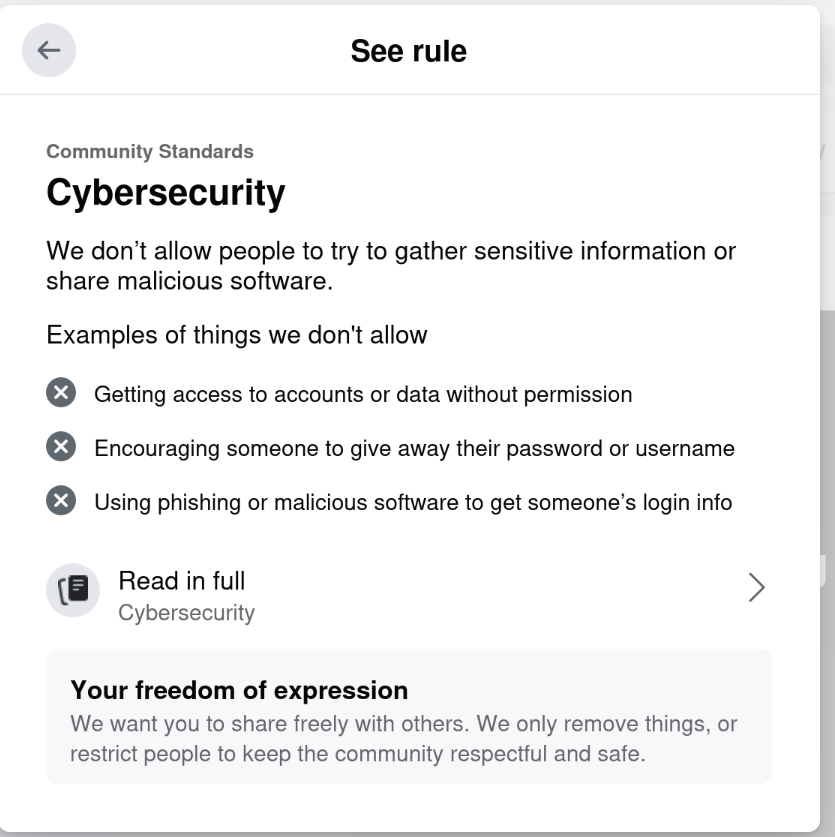

In light of this week’s announcement by Meta (Facebook, Instagram, Threads, etc), I have been pondering this question: Why am I, a person that has long been a staunch advocate of free speech and encryption, leery of sites that talk about being free speech-oriented? And, more to the point, why am I, a person that has been censored by Facebook for mentioning the Open Source social network Mastodon, not cheering a “lighter touch”?

The answers are complicated, and take me back to the early days of social networking. Yes, I mean the 1980s and 1990s.

Before digital communications, there were barriers to reaching a lot of people. Especially money. This led to a sort of self-censorship: it may be legal to write certain things, but would a newspaper publish a letter to the editor containing expletives? Probably not.

As digital communications started to happen, suddenly people could have their own communities. Not just free from the same kinds of monetary pressures, but free from outside oversight (parents, teachers, peers, community, etc.) When you have a community that the majority of people lack the equipment to access — and wouldn’t understand how to access even if they had the equipment — you have a place where self-expression can be unleashed.

And, as J. C. Herz covers in what is now an unintentional history (her book Surfing on the Internet was published in 1995), self-expression WAS unleashed. She enjoyed the wit and expression of everything from odd corners of Usenet to the text-based open world of MOOs and MUDs. She even talks about groups dedicated to insults (flaming) in positive terms.

But as I’ve seen time and again, if there are absolutely no rules, then whenever a group gets big enough — more than a few dozen people, say — there are troublemakers that ruin it for everyone. Maybe it’s trolling, maybe it’s vicious attacks, you name it — it will arrive and it will be poisonous.

I remember the debates within the Debian community about this. Debian is one of the pillars of the Internet today, a nonprofit project with free speech in its DNA. And yet there were inevitably the poisonous people. Debian took too long to learn that allowing those people to run rampant was causing more harm than good, because having a well-worn Delete key and a tolerance for insults became a requirement for being a Debian developer, and that drove away people that had no desire to deal with such things. (I should note that Debian strikes a much better balance today.)

But in reality, there were never absolutely no rules. If you joined a BBS, you used it at the whim of the owner (the “sysop” or system operator). The sysop might be a 16-year-old running it from their bedroom, or a retired programmer, but in any case they were letting you use their resources for free and they could kick you off for any or no reason at all. So if you caused trouble, or perhaps insulted their cat, you were banned. But, in all but the smallest towns, there were other options you could try.

On the other hand, sysops enjoyed having people call their BBSs and didn’t want to drive everyone off, so there was a natural balance at play. As networks like Fidonet developed, a sort of uneasy approach kicked in: don’t be excessively annoying, and don’t be easily annoyed. Like it or not, it seemed to generally work. A BBS that repeatedly failed to deal with troublemakers could risk removal from Fidonet.